I am not my brain

- the map is not the territory -

by Joel A. Wendt

We all know this about maps. You can have a very detailed map of your home town, or even pictures from Google Earth, and the experience of that map or those pictures is not what we experience when we walk through the actual town - the actual territory.

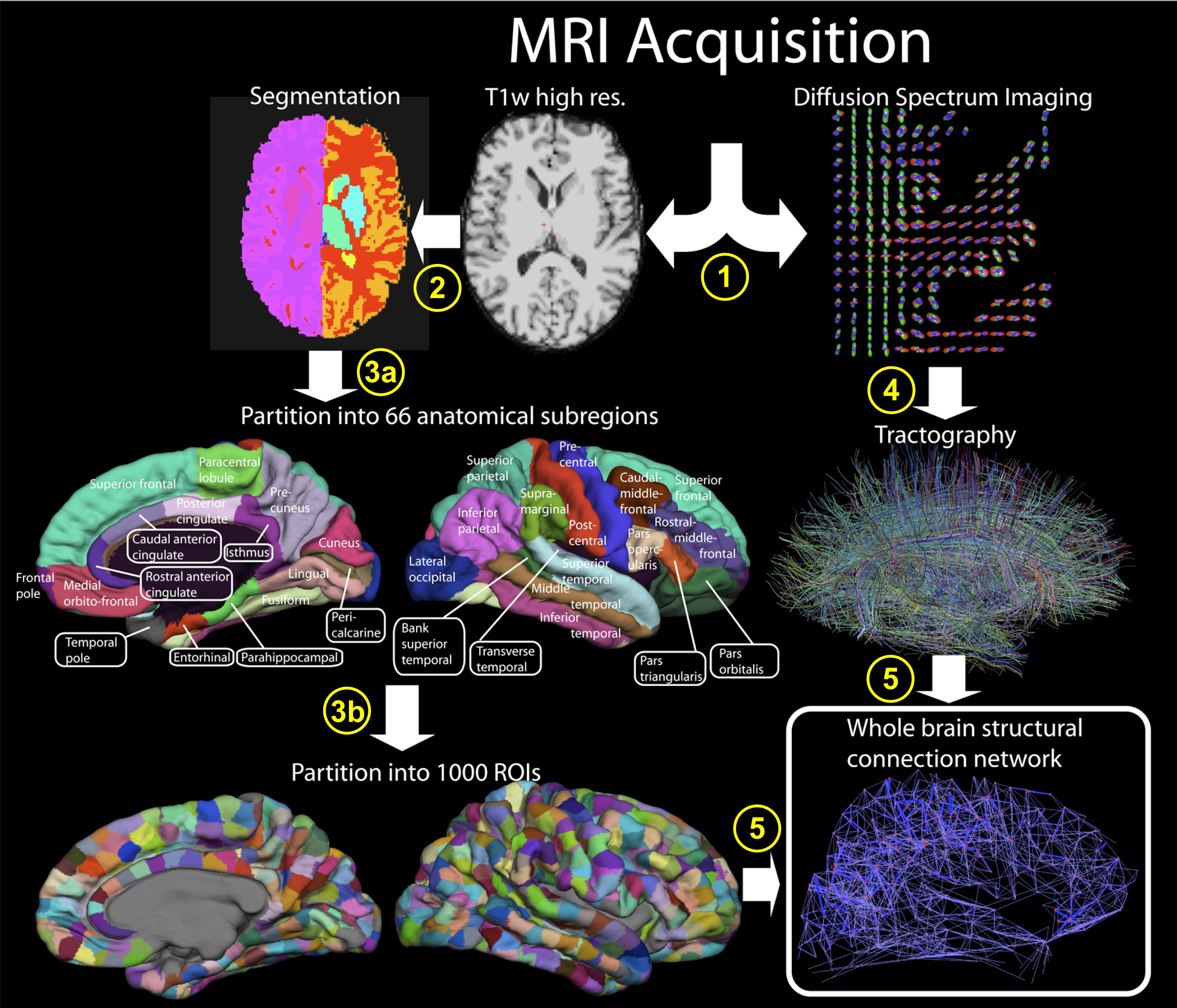

This ordinary common sense truth points to a major flaw in the thinking applied by brain-scientists to what they believe they come to know through their studies. Regardless of how intricate their maps, how three dimensional, how colored, how full of data and time-stamped, brain-studies only give us maps to the mind. The real territory of the mind is only available to us as an experience, directly in our own consciousness, and directly to what ordinary consciousness conceives of as our inmost “self”.

The central problem this essay addresses is not with the detailed arts and the hard work of the brain-scientists, but with the fundamental presumed logical assertions, born in a misdirected thinking/cognition, that fail to give us real explanations of what all that research is believed to ultimately mean. We will not find the true human being in the idea that we are just an inadvertent consequence of a random process, that has accidentally created a meat-organ with consciousness, however intricate and complex.

No doubt this research can and will provide advances in human knowledge of the nervous system. In fact, recent capacities developed to enable people with missing limbs to use thought to drive the action of a prosthetic limb are not just a wonderment, but perhaps a kind of proof, which may lead to a conclusion opposite to the one held by those who currently say we have no free will.

The basic Riddle remains: Can these views of the outside (the maps to the brain) ...

Our languages are littered with expressions about this relationship between self and mind and experience. All personal pronouns used in any sentence have no meaning if there is not an experienced self, which lives within the actual territory of consciousness, making the relevant judgments. The same holds for most of the “rules” of grammar. Were we to accept the asserted implications of some of the brain-scientists, we would have to throw away great portions of thousands of years of language development.

... logically give us real knowledge of what lives in the "inside-territory" of that dancing child - that whole human being?

~~~~~~~~~~

The brain-scientist does not even have a way to “see” consciousness or thoughts or mental pictures or imaginative pictures or feelings or any of the thousands upon thousands of aspects of the actual rich and wise inner territory we all daily experience. The brain-scientist only sees surfaces, or “outsides”, which he "maps", while only ordinary consciousness itself sees the “inside” (the territory). And, these references to an inside and an outside hardly touch the reality of experience, which generally is known as a whole - inside and outside implicitly intimately linked in the total field of consciousness.

Yet, many of the brain-scientists are trying to tell us that consciousness - the territory - is rooted in the physical brain, that there is no self, and that there is no free will. Why?

Sadly, to answer the “why” question, we can’t trust the brain-studies which the brain-scientist likes to use to support his conclusions. Then arises an additional question: why can’t we trust that work? The answer to these questions is not so simple, but nonetheless all the more crucial for being a bit complicated.

Everyone alive today is born into a culture and a language. Each individual culture is complicated. The religions are all different, and the historical paths through which that particular language arose are also complex.

Scientists like to act as if they are creating universal knowledge. A valuable effort if they were disciplined enough, but the historical facts of the development of modern science reveal that at far too many crucial steps it progressed by eliminating from its considerations aspects of existence that could not, in general, be counted or otherwise measured. Science in its youth (it can still mature, should its priests so choose), took things apart, and liked to count and measure these many parts, finding in mathematical relationships some logical surety that would otherwise seem lacking, if, instead of only quantities, this useful discipline tried to also be scientific about qualities.

For example, gravity, as a number relationship between masses is fine, but when we try to be scientific about what it “feels” like to fall from a great height, we don’t know what to do. Weightlessness, sky diving, bungee jumping - to have knowledge about that experience, we have to have that experience. Keep in "mind" that the effect of many qualitative experiences is to change us. In the debate between Nature and Nurture hides a very special question. The human being becomes something out of those complex and rich life experiences of their individual biography that can never be measured, counted or turned into a number.

The whole history of modern science since the 1500s is about analysis and counting and measuring (and similar eliminations of the qualities of experience), such that by the early 20th Century Sir Arthur Eddington was to say: “We are on a path of knowing more and more about less and less.” The details (quantities) grow exponentially, while the understanding of the whole (qualities) becomes lost in the avalanche noise of more and more theories, requiring greater and more costly experimental apparatuses. This has gone so far as to produce a claim that the physicists studying at the super-collider at CERN recently discovered the theoretically imagined "god particle". Materialistic science, having banished God from its considerations (no qualities), now wants to borrow that name/word to describe some extremely tiny "thing", which will illuminate all of existence in some fanciful way.

This confusion (quantities without qualities) then (having arisen over centuries) becomes embedded in the meanings of the very language we use, such that when a modern brain-scientist is born, and then educated, the language paradigm by which he is initiated into his discipline already bears within it decades and more of numerous unproven assumptions and logical errors of thought. These then have come to live in the very meaning of many individual words.

Take, for example, the words: subjective and objective. The brain-scientist strives for objectivity, and shies away from his own mind, because of its assumed subjectivity, unaware perhaps that about 200 years ago those two words meant the exact opposite of how we use them today.

Of course, few in science like to hear such points of view, which is why in recent years a debate has broken out between leading scientists and philosophers, where these scientists want to put forward the idea that they no longer need philosophy at all. A lot of the basics of this problem were exposed in 1962 by Thomas Kuhn’s little book: The Structure of Scientific Revolutions. Kuhn wrote this book after he decided, as a beginning scientist in physics, to look into the history of the themes about which he wanted to do his Ph.D. He then discovered that the histories of science he had been taught were false. The reality is that science travels a kind of zig zag path, encountering dead ends and false conclusions, before finding a way to move forward again. The problem was that the history of science he had been taught was written as if the path was straight, and there were no mistakes that later needed to be corrected.

We still get taught today this made-up straight line history, which is done as if to suggest that the scientific method always leads to surety of fact. We are taught of scientific theories as if they were inevitable, and no other conclusions were possible. But that has never happened in the history of science, ever. The result is a kind of system of religious-like "beliefs", such that if a “leading scientist” says it, the general public is likely to believe it is true. Sadly, there are a lot of mistakes still out there, haunting the world-view many wish us to believe.

Some of these false “paradigms” involve the brain-sciences, and many of these false paradigms have become hidden in the words we use everyday. As I suggested above, the problem is complex, and more examples will be given as we proceed.

~~~~~~~~~

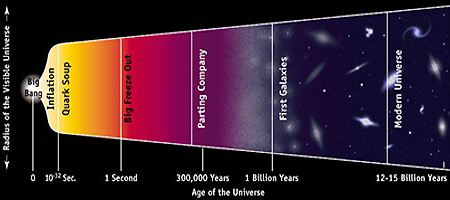

Brain-studies require (and exist in) a general contextual background, and it is important to recognize that this background cannot really be framed in the way many believe. Take, for example, two main theories commonly assumed to be completely true (in the popular mind): Darwinian evolution and Big Bang cosmology. In the case of each of these, a philosophically trained (logically rigorous) mind finds many difficulties. Self-conscious thinking and thought illuminate important unanswered questions.

A significant essay on this problem, as regarding Darwinian evolution (such as survival of the fittest), can be found here: Dogma and Doubt, by Ronald Brady. In this essay Brady explains precisely why Darwinian evolutionary theory fails as a workable/testable scientific theory, due to its lack of philosophical/logical rigor. The main issue has to do with the idea of speciation, which evolutionary theory attributes to natural selection. Somehow in the darkness of a past we can never directly empirically observe, an incredible variety of species came into existence, one species being formed out of the previous. The amoeba becomes the tadpole becomes the lizard becomes the rat becomes the monkey becomes the man.

Almost every necessary transitional species is missing from the geological record. The gaps are huge, and our failure to note this problem carefully and fully is a serious weakness for the theory. What Brady shows is that, as presently formulated, the idea of natural selection can’t be tested. If it can’t be tested, it can’t be falsified; and, if it can’t be falsified then it is useless as a theory from a philosophy of science point of view.

The geological record is supposed to consist of several very long periods of evolutionary stasis - what is there at the beginning of a particular period, such as the Cambrian, is what is there at the end. In this same version of the theory, the gap between one long period and another long period is much smaller in time, and filled with tiny micro-organisms not nearly as complicated as those in the longer periods of stasis. In order to "explain" this, the "theory" asserts that extinction events took place, completely eliminating almost all of the previous flora and fauna. The name given to this is: punctuated equilibrium. A better name would be metamorphosis, and the reader of this can find that discussion in my 2010 book: The Art of God: an actual theory of Everything. A fully awake thinking learns that the whole of the geological record is an ordered sequence of successive instances of metamorphosis, as a consequence of the activity over eons by the living Being of the Earth.

And, in spite of this failure, the theory has come to be so universally treated as factual that it has become a dogma that can’t be questioned, and which is used everywhere in biology to explain all kinds of phenomena, without anyone ever looking behind the curtain and seeing that the wizard has no clothes. Anyone wanting to refute what is said here in this essay must become familiar in all its details with Brady’s work, so as to realize precisely what the rules of authentic philosophic inquiry require, in order to produce, at the least, a workable/testable theory.

Science is full of such assumptions, which start out as theories, only to become unquestioned religious-like dogmas, and from there enter into our common understanding of the world as if they were scientific fact, when they are not by any means able to stand before the disciplines regarding knowledge and truth that are the responsibility of philosophy (a responsibility many academic philosophers do not fulfill).

Big Bang cosmology, as a theory, is of a whole other order than Darwinian evolution. In biology, the material is much more accessible. Prior ages have left fossils and bones, and the geological record (the strata) can be touched and taken to a laboratory to be tested. The starry world is very far away, and the actual physical phenomena, which constitute the evidence, are only light vibrations of various frequencies (from infrared to ultraviolet), including all kinds of oddities such as “cosmic rays”. We capture their effects via such inventions as photographic-like instruments and large antenna arrays, but unlike biological artifacts we can’t go into the field and pick up star-stuff, and take it to the laboratory in order to empirically confirm our many hypotheses.

Even the idea of the nature of “space” itself needs to be reviewed, which I have done previously in my essay: The Misconception of Cosmic Space As Appears In the Ideas of Modern Astronomy - and as contained in the understandably limited thinking embodied in the conceptions of the nature of parallax and redshift. The current theory has probably less than 1% empirical facts as aspects of its truthfulness. Most of it is made-up ideas, as various scientists tried to guess at what happened, in ways whose main virtue was that they denied a religious creation explanation. The reality of the starry world is that "space" itself at cosmic distances is no longer three-dimensional, but two-dimensional. The sphere at infinity becomes a plane, in accord with the rules of projective geometry. Rudolf Steiner: "Think on it: how the point becomes a sphere and yet remains itself. Hast thou understood how the infinite sphere may be only a point, and then come again, for then the Infinite will shine forth for thee in the finite."

Presently the Big Bang theory is under a lot of stress even among astrophysicists, who are still uncertain as to the implications of quantum experiments, super-collider data, string theory, and that holy grail of physics: “a theory of everything”, which is hoped to explain the relationship of gravity, electro-magnetism, and other forces seemingly involved in the organism of the smallest particles. Even that theory requires us to believe that everything is made up of parts, something that we don't actually observe in Nature. Recent explanatory "inventions" such as dark matter and dark energy may, like the mutation/monster idea used at one time to explain speciation in evolutionary theory, make up for the anomalous aspects of the evidence. But anyone who uses the internet to get into the specifics will find many arguments hidden away in various journals and other places, where scientists and philosophers disagree out of sight of such mainstream fairy tales as the recent TV series Cosmos, starring Neil deGrasse Tyson. Here is a statement on some of the cosmological problems:

Redshift in the light from galaxies led to the belief that the universe is expanding, and this belief has persisted for 80 years. But modern observational evidence, especially from NASA and European Space Agency space telescopes and satellites, has clouded the picture and raised many doubts. In 2004, an open letter was published in New Scientist magazine, and has since been signed by over 500 endorsers. It begins: “The big bang today relies on a growing number of hypothetical entities, things that we have never observed-- inflation, dark matter and dark energy are the most prominent examples. Without them, there would be a fatal contradiction between the observations made by astronomers and the predictions of the big bang theory. In no other field of physics would this continual recourse to new hypothetical objects be accepted as a way of bridging the gap between theory and observation. It would, at the least, raise serious questions about the validity of the underlying theory.” [the original source page for this is no longer available: (http://cosmologystatement.org)]

A point worth remembering: The reason Darwinian evolution and the Big Bang are both called "theories" is because we don't have the capacity to go into even the near evolutionary past, and certainly not the deep past of the cosmos, and actually see, hear and measure what happened. We lack the ability to be empirical about our past - we can't go there. Seeing is believing is the cliche, and in this case we never see this past at all. The minds of scientists imagine this past into existence, and then sell us their most popular tales as if they were facts.

So we take the small bits of aspects of our present, and then weave stories about the past we cannot test, and top that off by forgetting the huge number of assumptions involved in making up these stories. Check out this for part of the underlying issues: Uniformitarianism. You could not find a bigger assumption about the universe and nature than this one, which assumes all known facts today, about what are called constants, remain unchanged into the most extreme past and conditions of space and time. Gravity is assumed to be constant throughout all time, etc. Any serious observation of Nature reveals the opposite: Everything changes, nothing is fixed. And that observation doesn't even deal with the fact that we cannot go into the past and empirically prove that these constants in fact are constant, or that the radiation used in carbon dating existed in this same unknowable past. These supposed constant forever constants and radiation dating ideas cannot be empirically proved. They are just assumed to be true.

One honest way to describe this situation is to remember that for about 400 years physics has been too busy taking things apart, eventually in some cases just by smashing them together to see what happens, meanwhile leaving aside from consideration anything that can’t be counted or measured. The development of physics preceded the development of biology, which itself eventually led to brain-studies. Both fields are littered with unprovable assumptions. By the time the basic riddles, about consciousness and the nature of mind, got sufficient scientific attention, and given that only that which could be counted and measured was allowed any scientific meaning, students of consciousness, in order to act as if they were being scientific, had to perform experiments that produced quantifiable data.

Here is an article about a physicist who actually pays attention to philosophy: Physicist George Ellis Knocks Physicists for Knocking Philosophy, Falsification, Free Will

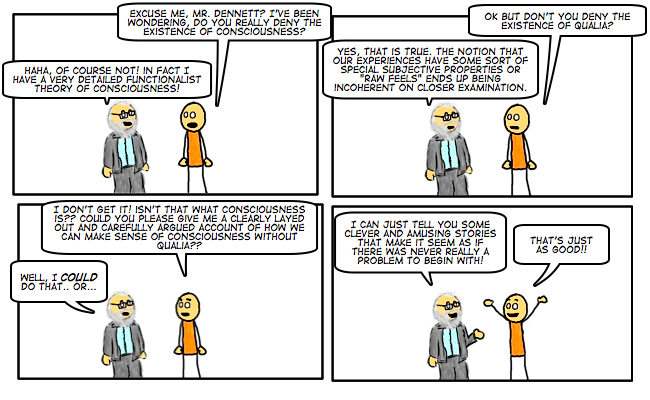

Yet, of all the objects possible for scientific study, what realm of life could be more filled with qualitative phenomena than consciousness. Philosophers even coined a term for these phenomena, calling them: qualia.

From Wikipedia

Qualia is a term used in philosophy to refer to individual instances of subjective, conscious experience. The term derives from the Latin adverb quālis, meaning “what sort” or “what kind”. Examples of qualia are the pain of a headache, the taste of wine, or the perceived redness of an evening sky.

Daniel Dennett (b. 1942), American philosopher and cognitive scientist, writes that qualia is “an unfamiliar term for something that could not be more familiar to each of us: the ways things seem to us."

Erwin Schrödinger (1887–1961), the famous physicist, had this counter-materialist take: The sensation of color cannot be accounted for by the physicist’s objective picture of light-waves. Could the physiologist account for it, if he had fuller knowledge than he has of the processes in the retina and the nervous processes set up by them in the optical nerve bundles and in the brain? I do not think so.

The importance of qualia in philosophy of mind comes largely from the fact that it is seen as posing a fundamental problem for materialist explanations of the mind-body problem. Much of the debate over their importance hinges on the definition of the term that is used, as various philosophers emphasize or deny the existence of certain features of qualia. As such, the nature and existence of qualia are controversial.

The problem is that the Nature we have been studying doesn’t come in pieces, but in wholes and systems. The logical problems can be kind of silly, once they are pointed out. So, for example, a physicist might describe a tree as made up of very small objects which we cannot see. Meanwhile, over in biology, evolution is described as a completely random and accidental process which evolved eyes for the purpose of survival, but oh, by the way, no need for this survival goal to include seeing atoms. To the imaginary hand behind natural selection, the seeing of cosmic rays, or protons is not much use. This leads to a really interesting question from a philosophical point of view, that doesn’t have an answer (yet). We need to see the tree, so we see the tree, and the tree is very much there whether or not we say it is made up of very small, invisible to the senses, parts. For some details on parts and wholes and analysis and synthesis in the thinking about Nature, see my essay: Electricity and the Spirit in Nature.

~~~~~~~~~

A big part of the problem regarding the brain has to do with the experience we name by the word: consciousness. For all that the brain-students study, they have not even gotten close to explaining what consciousness is, or how the matter of which the brain is made produces it. In point of fact, this basic un-answered question is so crucial, that it is mostly resolved by ignoring it. The central mystery/riddle has been replaced with the assumption that the brain miraculously produces consciousness, thoughts, and all other mental phenomena, of which there is a considerable variety. But as yet there is no model for how this wonder is accomplished, and certainly no testable theory for its daily miracle.

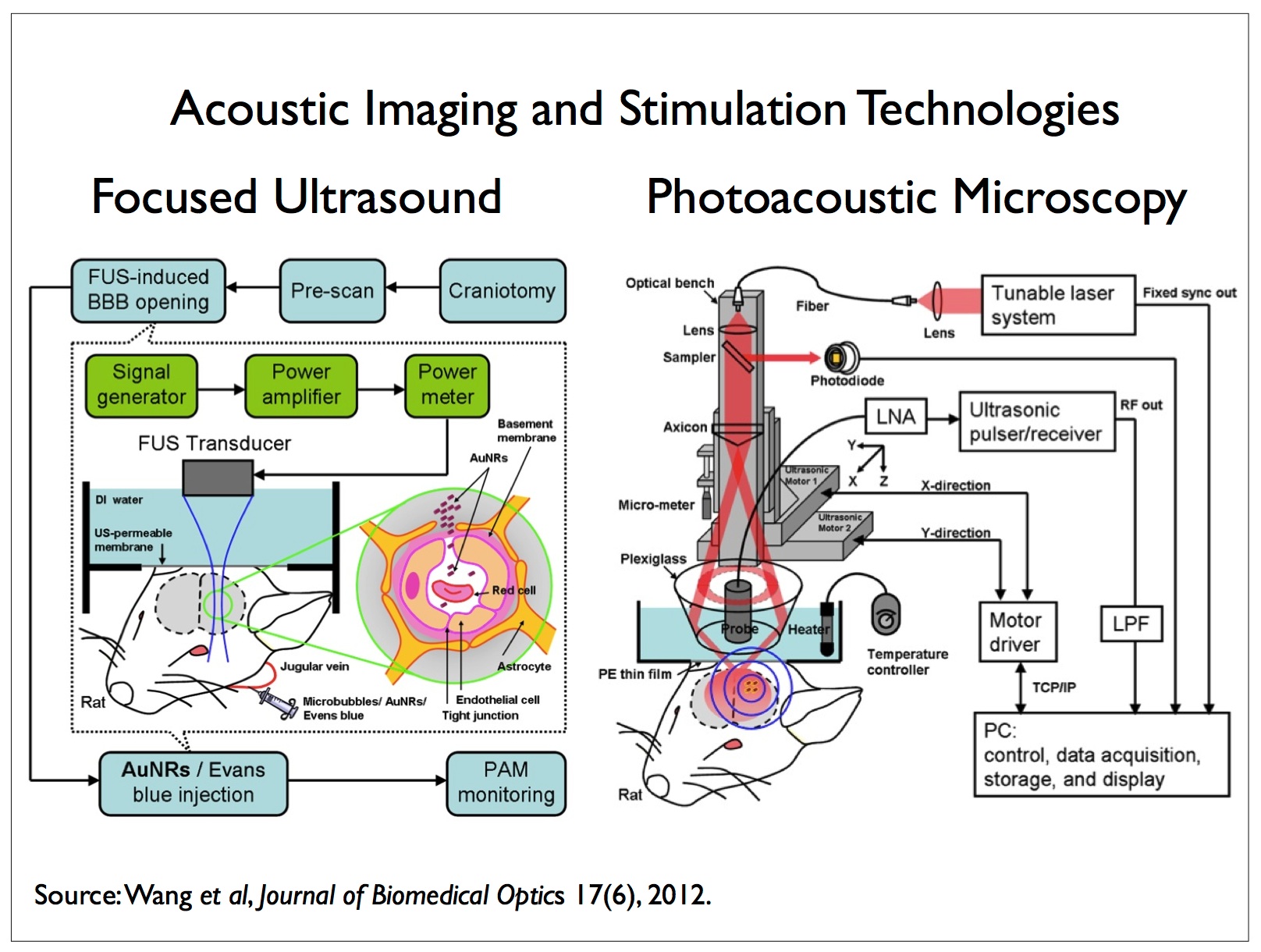

Just as with the dogma regarding speciation, that is the central problem in evolutionary biology, the brain-student lives with an unproven dogma that consciousness is produced by the material organ we call the brain. The brain experiments are many, but have a common problem because present day scientific disciplines require simple kinds of quantifiable studies. So the brain-scientist flashes some pictures at people's eyes, seems to watch what happens in the brain with some kind of instrument, and then asserts certain logical conclusions must follow.

The sad fact is that the brain-scientist is not watching what happens in the brain at all. He has no clue what is happening there. The very best data the instruments provide is that the brain is doing something in certain previously mapped regions. What it is doing is entirely a made up theoretical construct, for which no test has yet been devised. It is just assumed that the brain produces/contains consciousness in the way all of us recognize ourselves as being conscious. But that “consciousness” is not seen at all.

We know we can interrupt consciousness. We can put the mind to sleep. We can poke an electrode into a region of the brain and stimulate a memory. We can study people where the links between the hemispheres are severed. We can study people where all kinds of brain injuries limit their speech or the other senses. We just can’t directly observe the central acts of consciousness themselves. Everything is inferred. And, all inferences are based on the assumption that the brain must produce this “consciousness”, which is an “object” that no instrument sees, ever.

Except for one. There is one instrument which sees consciousness directly. Our own mind. But the brain-student does not study his own presumed subjective conscious experience. The one tool he has for conscious awareness of consciousness itself is ignored. Instead the brain-scientist uses instruments which notice electrical and chemical activity in brain cells in various regions, and with his own mind he forges what he believes are logical links about what that means. Then he has a press conference and announces there is no free will, and his studies have proved it.

Even the fields of psychology and psychiatry tend to involve the study of the conscious minds of others, from the outside. Questions are asked. Polls are taken. Dialogue arises. The student of the psyche, who tries to heal injuries to that psyche, does so by asking questions; and, if he is any good at that discipline, the main thing the healer does is get the subject to become more awake inwardly to his own life of mind. Instead of telling the subject what to think, the healer of the psyche tries to induce that subject to think more clearly about their issues themselves. This kind of healing of the emotional complexities of the mind makes no logical sense at all unless the individual being helped is free to make changes in his own psychology. Otherwise verbally based healing arts are meaningless. If there is no free will, and consciousness is the product of a large ball of meat, then all that therapy is not only pointless, it never should succeed.

The brain-scientist can’t do that. He can’t fix the psyche. Perhaps he can fix some dysfunction in the matter of the cells - the neurons - but appealing to the self-consciousness of the individual with the problematic psyche is not in his repertoire.

Now there is a kind of study, which deals with consciousness in a very exact way, but at the same time somewhat indirectly. This is the science of philology - or the study of the changes in the meaning of words over time. Just about everyone who is not confused by the brain-studies knows that language is a central element in the act of thinking. We do an inner wording which we label: discursive thinking. We talk to ourselves in our own mind - we ruminate, we obsess, we worry. And, thinking is one of the main actions that takes place in consciousness. Not only that, but by thinking in words, we also engage (or become aware of) concepts and what we call: Ideas. Our languages would not have these words: concepts and ideas, if there was not some degree of inwardly experienced reality that observed such mental phenomena. At the same time, we need to never forget that a brain-scan does not see either concepts or ideas. Only the "I" of one's own mind perceives such ephemeral phenomena.

For example, even in brain-studies the scientist has to obtain the participation of the individual whose brain he wants to watch with his scientific instruments. The brain-scientist still has to use language to explain the meaning of the study to the object of his study, and then stimulates that person's mind. After which he then asks that person to use language to report what they observed themselves, in their own consciousness. So while the brain-scientist is making his studies, that process is entirely dependent on what the object of the study inwardly observes, how that subject chooses to act or cooperate, and both aspects of the experiment are dependent on language - on the meaning of words - in order to have any relevant discussion. And, as philologists know well, "meaning" is a much more problematic matter than we ordinarily realize.

These facts are generally left out of the brain-scientist's considerations. He assumes that he can divine the meaning of his experiments solely from an inferred relationship between what his instruments see as various kinds of light and warmth and chemical activity in the brain, and the imaginary “consciousness” whose actions he believes he is thereby observing. This is not logically or philosophically sound.

Meanwhile, the acquisition of language itself, in the growing and developing child, also remains a mystery. Lots of theories, but no means yet to perform experiments that actually “see” this crucial development in human nature. Why can’t we see it? Because consciousness has this odd quality: it is invisible to any outside observer. We can see (experience) our own, but any thoughts we have about the consciousness of another human being can only be inferred - they are not empirical or otherwise observed. That fact alone has not been given enough attention by the brain-scientists, which is a crucial lack from the point of view of the natural rules underlying any philosophically pragmatic and logical approach to knowledge.

~~~~~~~~~

Let us, for a moment, recapitulate our situation, perhaps adding a few helpful nuances.

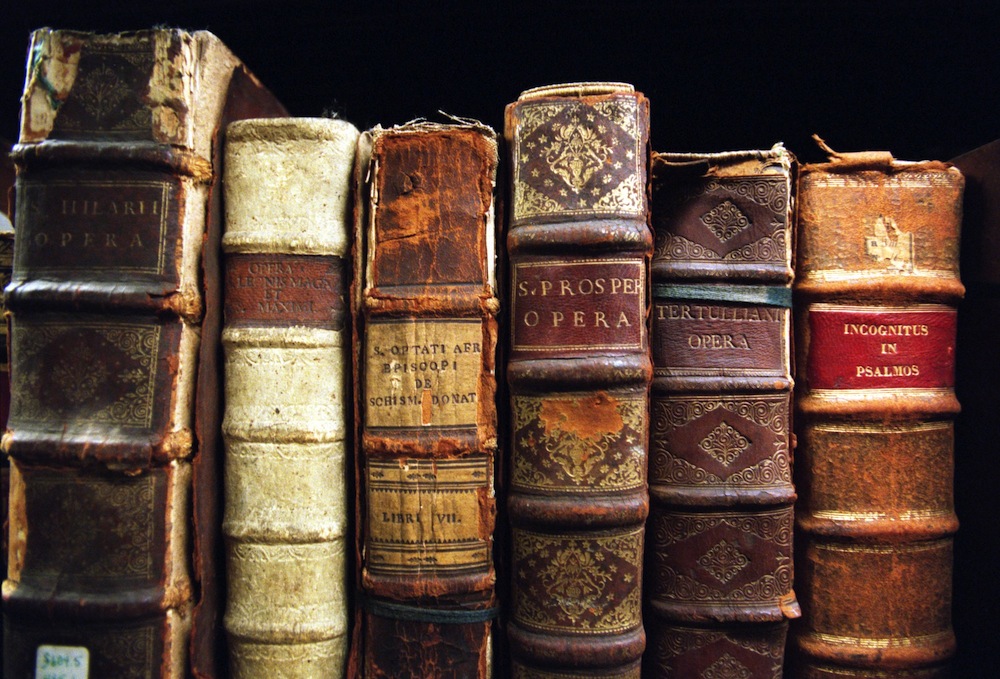

Four to five hundred years ago, natural science was born, originally called: natural philosophy. Philosophy already had existed for thousands of years, but with the advent of a change of “consciousness” in human nature in general, something arrived which a few thinkers and writers on this question called: the onlooker separation. The best source for this is the works of the thinker and philologist Owen Barfield (a member of the Inklings, with C.S. Lewis, J.R.R. Tolkien and Charles Williams).

Barfield observes the changes in the meaning of thousands and thousands of words - in many languages - over time, and then reasons that these changes reveal an underlying change in human nature at the level of what we call consciousness. This is called: the evolution of consciousness. While we generally recognize a physical evolution, we are not yet fully awake to an evolution at the level of consciousness, the very central problem/riddle for the brain-scientist. Barfield has written a number of well-recognized, thoughtful, and challenging books. For example, Barfield's book, Worlds Apart, placed in imaginary conversation representatives of various disciplines of thought, such as: a lawyer with philological interests; a young man employed at a rocket research station; a professor of physical science; a retired school master; a biologist engaged in research work; a linguistic philosopher; and, a psychiatrist, which led T. S. Eliot to describe it as: "An excursion into seas of thought which are very far from ordinary routes of intellectual shipping". The title, Worlds Apart, gives an apt description of the struggle for members of these disciplines to find ways to even be in the same conceptual universe, much less find common solutions to crucial modern questions. It was Barfield's genius of spirit that enabled him to so accurately grasp this disconnect among separate intellectual disciplines, and then unveil the resulting confusion.

Before this change of consciousness, human beings felt themselves as participating in the world of Nature, not as separate from it. Barfield gives many many details here, so this really can’t be questioned, unless we are willing to take up several years of study. The main effect for the brain-scientist is that this new knowledge, which Barfield and others produced during the 20th Century, is as yet mostly unknown to him. His university experiences trained him in his field of interest, but his "education" is not likely to have taught him the wider views living outside his field. Remember Eddington: "We are learning more and more about less and less".

Natural Philosophy, on its way to becoming natural science, begins (from an onlooker point of view) to take everything apart. Thus a changing evolving consciousness inaugurates the progressive effort to analyze down to the level of its smallest bits all sense objects (forms of matter), yet for a considerable time in the first couple of hundred years a religious or spiritual view remained. Our histories of science (remember Kuhn?) forget that Kepler was an astrologer, and Newton an alchemist. For a long time many early natural philosophers (scientists) were also believers in God. And, in the earliest days, the Church murdered natural philosophers if their views conflicted with Church dogma (Bruno was burned at the stake in 1600, and Galileo was forced to recant under the threat of torture and/or death).

A crucial juncture was reached around the years 1700 to 1730, when Newton and Leibniz argued about who invented the calculus, and what was the real nature of the smallest parts of matter. Newton was inclined to take the view that the smallest parts were inanimate (the “atom”), while Leibniz had the inclination to view these smallest parts (“monads”) as having consciousness and will. For both the main difficulty was that matter, in the form of the human being (as well as animals), was animated. It got up, moved around and thought and spoke. An explanation for where that “animation” came from needed to be found.

In his book Man or Matter (published in 1950), Ernst Lehrs points out that some forms of matter are “inert” (such as rocks, which don’t respond to being stimulated from the outside) and other forms of matter are “alert” (they react to being touched or stimulated).

While those questions appear to be settled for the main streams of science, especially modern physics and biology, the philosophical-logical problems remain. For example, while current theories in Big Bang cosmology and Darwinian evolution conceive of that which is alive (alert) as having been built up of matter which was originally not alive (inert), we have no way to go back in time and empirically observe such processes. In fact, we can’t find such a process anywhere in Nature today - a process whereby the living is derived from the lifeless. We only empirically observe, in presently manifesting Nature, processes whereby the living produces the lifeless.

For example, the growing and developing human being in the womb starts out alive (derived from living seed and egg), and only after a long time do the “bones” appear, which with age are more and more lifeless (stop growing and become very brittle), ultimately surviving death as "inert" remains in burial sites. When we get wise enough, we will realize that the "living" earth has done the same thing (all rocks are extruded remains of a still living self-conscious organism existing on a planetary scale).

While some modern scientists strive to synthesize the living out of the non-living, they are doing that in a laboratory, and can nowhere point to where Nature does that as part of its normal processes. Evolutionary theory and empirical reality cannot be made to conform, which from a philosophical logical point of view, makes for a really serious problem for theory. If we are going to posit a creation process where inert matter produces alert matter, then we very much need to observe it, not just theorize (imagine) that everything had to happen that way, regardless of how many billions of years (we can't actually observe) were available.

Part of the problem, from the standpoint of the history of natural science since the 15th Century (as it arose from a change in human nature - consciousness - at that time), is that the process of analysis that arose, where physics spent a lot of time taking things apart, became more and more involved in the counting and numbering of “things”, seemingly lacking consciousness themselves. Mathematics became a powerful tool. As this tool became more dominant in the thinking of scientists (only what we can count has meaning), philosophy (with its inconvenient questions about "qualia") more and more took a back seat, as if it had nothing more to contribute. On the chessboard of science, the queen (mathematics) became more important than the king (philosophy), because the queen's powers were in a way more “sexy”.

However, the rules of the game of rational inquiry require that the king - philosophy - is the essential piece, otherwise the game is lost if the king is made immobile - or checkmated. Remove philosophy from the board and science becomes religious - a system of dogmas and beliefs, rather than a system that is rational and logical. This is in fact the present state of natural science today. Everywhere dogmas, such as observed in Brady’s article above, and most especially today in the completely unproven assumption that the material brain produces “consciousness”, an invisible quality of human nature that scientific instruments cannot even detect, but which every human being knows directly and intimately.

Let us come at this once more, perhaps a bit more poetically. Art often “sees” the world in a way neither reason nor faith can alone. I know I am somewhat repeating myself, but this basic picture is important to grasp from as many different points of view as possible.

The brain scientist swims in a sea of ideas, but doesn’t notice them. He is born into this sea as he acquires language, goes to school, is raised and educated. The better his education (which is something different from mere training), the richer is his apprehension of the sea of ideas. Certainly he becomes familiar with specific kinds of individual concepts, and acquires some skill in their manipulation, something he needs in order to get his degree - the license which allows him to do research and earn a living at it. Specialized language, belonging to the discipline of brain-studies, is required in order to enter the temples wherein those arts of brain research on consciousness and thinking are celebrated.

The more trained and the less educated the individual brain-scientist is, the less rich is his ability to see the forest for the trees. He knows the neural landscape, but not the essence - the whole (for a wonderful "education" in how the part relates to the whole - to the context, go to The Nature Institute). The brain-scientist's discipline, by shunning an empirical self observation of his own consciousness, fails to notice the greater part. “Imagination is more important than knowledge. For knowledge is limited to all we now know and understand, while imagination embraces the entire world, and all there ever will be to know and understand.” (Einstein)

Sure, ... we all know this greater part, for we have many names for it, such as “human nature” and “self understanding”. But the brain-scientist, like many other scientists, is fascinated with trees (the brain cells) and comes to believe the whole - the forest - is just a complex collection of trees, without the whole being something very different in itself. Dogma and belief overwhelm reason, and the total modern paradigm built over the hundreds of years of the existence of natural science decrees in its queen-like power that all is matter, and there is no spirit. This vast sea of ideas (named by Plato as one of two realms: the material realm and the transcendent realm of forms) is not noticed, for its patterns and currents (in the world of ideas) are ignored, while specialization focuses on the one object the brain-scientist assumes answers all questions: That the mind and the brain are one individual “thing” - a kind of living stuff, without any other feature. The fact that he himself directly observes the essential mystery (consciousness) in himself is forgotten. Why?

Fear.

In response, please allow me a brief rant (to be polemical):

Every human being knows they have dark places within. They know they lie and cheat and steal, and suffer and forget and experience loss and other terrors of life. But the discipline of the brain-scientist too has its holy grail, just as does the physicist with his worship of the “theory of everything”. Take apart the brain-structures with enough care, they seem to believe, and all of human nature will then be explained. Some think to test for and find in the matter even the source of morality, which suggests - clearly - that one should also find there the source of immorality. Poppycock.

The disciplines of Natural Science are modern. They are no more than 500 years old, and many within that temple want to believe that all ancient peoples were stupid, did not know how to reason and just made stuff up to fill in the gaps. Only modern science can unveil the real “truth”. More poppycock.

One of the ways we moderns are so much like our forebears is expressed in our hubris and our arrogance. Every Age believes it has arrived at Truth, which will be eternal for all time. What could be more arrogant than for the natural scientist to assert that he can tell us what actually happened billions of years ago, and billions of light years away in space and time? That faith in our own minds is hardly different at all from the same impulse we attribute to those who wrote the creation myths that everywhere survived from the deeps of time. We accused the ancients of inventing stuff, when (we assume) they could not explain what they experienced. Yet we do the same - when we don’t know (can't empirically observe the past), we invent stuff, which we call "scientific theories", to fill in the gaps. Pray tell, what is the real difference between the great Myths of humanity and the grand Theories of modern natural science? If we read Barfield, he would explain these differences as arising from different kinds of consciousness, which have evolved parallel to all human development. The ancient Greek did not have a consciousness like ours, nor did the ancient Sumerian have a consciousness like the ancient Greek.

I am not my brain, whatever the brain-scientist with his dogmas and beliefs wants to assert. Every day of life proves this to me, and I don’t need some faceless group wearing white lab coats and torturing monkeys to tell me otherwise. They need to get some authentic “education”, and rediscover what the ancient wise have always said: “oh man, know thyself”.

The whole evolution of language, with all its open secrets, defies the assertion of some brain-scientists that there is no I - that self-consciousness is not real. The personal pronoun is everywhere, and even the brain-scientists could not get through a day without appearing essentially insane, if they didn’t use: I, you, we, us, and so forth. It is totally hypocritical to assert there is no self, and yet go through life using the very aspects of language and grammar that have no meaning unless our perception of self is real. Human nature is not that stupid.

Such brain-scientists are like a new version of drug addicts, drunk on what they seem to conceive is some kind of special knowledge about human nature, when all they have done is lose sight of the vast integrated contexts in which all knowledge arises. Excessive knowledge of the details of parts consumes our wisdom/intelligence and makes us incapable of seeing the whole anymore.

What reality is there to quarks and muons and bits and pieces of stuff, that only appear in an experiment that smashes tiny bits of matter together at outrageous speeds - a process which Nature never uses? A flower does not need a super-collider to blossom. And, the mind does not need neurons running fantasy quantum events in order to create poetry.

End rant.

The English language has the word “mind”. At the time of Freud and his friends, when they were exploring the unconscious and other phenomena seeking to explain human behavior, the Europeans, particularly the Germans, used the words Geist (spirit) and Seele (soul) to reference the “interior” life (consciousness territory) of human beings. Jung even posited that there was a collective or common aspect living in the unconscious - which had transcendent characteristics. In his book Freud and Man’s Soul, Bruno Bettelheim points out that when Freud’s works were translated into English (in the 20th Century), even though English had both “spirit” and “soul” as words, those kinds of references in Freud were translated as “mind”. We can call this a slight materialization of the idea of mind, one which moves away from any idea of spirit (transcendent form), and begins to more firmly root itself solely in matter.

As science developed further, during the 20th Century, the concept/term “mind” became even more materialized and was replaced, as it is today, with the concept/term “brain”. As well, a kind of split arose in academia, whereby the physical scientist of the brain became less and less able to have a meaningful conversation with a psychologist, for the latter could not treat his patients unless he believed in the interior life and in the free will of the individual to grow and heal from emotional trauma. Arguments among some philosophers and brain-scientists have, at the same time, become more polarized.

For a delightful example of a passionate philosopher trying to deal with the various things scientists tell him must be true, some of which he fully believes (principally, that the brain produces consciousness), watch Professor John Searle: Consciousness as a Problem in Philosophy and Neurobiology - the lecture runs about 57 minutes: https://www.youtube.com/watch?v=6nTQnvGxEXw

In that lecture Searle refers to what he calls dualism, and names Descartes as a prime exponent of this view that there is something other than matter, which might be called soul or spirit. Searle casually dismisses such a view, considering it obviously flawed and unreasonable. We could say that in the use of the word dualism in such a negative context, modern philosophers so inclined stepped fully into a materialistic explanation for "mind", i.e. that mind or consciousness had to clearly and obviously be a product of the biology of the brain. This was to be the only conclusion possible.

All of this was connected to a tendency in biology to only see material causes for all aspects of the phenomena we call “life”, eventually resulting in the present-day conception that the human being is only matter, and never spirit. In a way, what was known to the explorers of the unconscious as an interior aspect of human nature (the territory) became then over time something that was itself a kind of illusion produced by a material “brain” (the map).

The reader needs to realize that the above is very superficial. Books could be written, and have, which go into great detail providing a solution to the problem of dualism. Their main present-day feature is that they are little known outside of small circles, where individuals are more open to spiritual truths and practices. In effect there has arisen a kind of counter-Copernican revolution, involving the Romantics and the Transcendentalists, and now Rudolf Steiner's Anthroposophy (cf. Man or Matter, by Ernst Lehrs; History in English Words, Owen Barfield; Art and Human Consciousness, Gottfried Richter). Barfield even wrote a book about Anthroposophy, called: Romanticism Comes of Age.

Let us remind ourselves here: The brain-scientist does not see thoughts or consciousness. These are invisible to his instruments. Only the sometimes presumed illusory individual-self knows consciousness and thoughts directly. A very important point to recognize here is that the brain-scientist too has an interior life (a territory), and seeks to explain that territory by creating a complex map, which, whatever else it may do, remains only a kind of outer surface. The reality of a real territory - an “interior” - is being denied. Again, why?

One way to answer that question is to see that there is a kind of war going on at the leading edges of science, between a fully materialistic conception of a human being, and a more humanistic/spiritual conception. Perhaps this war is between abstract thinkers, and intuitive feelers. The former are more disconnected, and the latter seek out connection. What the humanist might call “I and Thou”, the abstract intellect sees only as “things” and “its”.

A good example is the difference in their relationship with Nature, between aboriginal peoples and modern peoples influenced by natural science. Nature has no interior for the latter, while it has a transcendent interior for the former.

~~~~~~~~~

The strange/funny aspect of all this is that the disconnected abstract thinker also has children and spouses, and co-worker relationships. Does he treat his family and colleagues as things and its? As if they were not persons, but only the product of a piece of meat called a brain, however complex? What kind of politics does a piece of meat have?

We have some interesting language phrases that are illuminating here. Someone might say: “I have a gut feeling”, possibly meaning they trust their intuitive connected thoughts. While another might say: “It's only in my head”, meaning they doubt the abstract disconnected thought.

To bring this into a more concrete situation here, let us describe and contemplate recent brain-scientist experiments that are alleged to prove there is no free will. The below in italics is from Wikipedia, and I have inserted a small comment inside [brackets]:

Neuroscience of free will refers to recent neuroscientific investigation of questions concerning free will. It is a topic of philosophy and science. One question is whether, and in what sense, rational agents exercise control over their actions or decisions. As it has become possible to study the living brain, researchers have begun to watch decision making processes at work. Findings could carry implications for moral responsibility in general. Moreover, some research shows that if findings seem to challenge people's belief in the idea of free will itself then this can affect their sense of agency (e.g. sense of control in their life). ...

... Relevant findings include the pioneering study by Benjamin Libet and its subsequent redesigns; these studies were able to detect activity related to a decision to move, and the activity appears to be occurring briefly before people become conscious of it. Other studies try to predict a human action several seconds early. Taken together, these various findings show that at least some actions - like moving a finger - are initiated unconsciously [“unconsciously” is a curious term to use here], at first, and enter consciousness afterward.

In many senses the field remains highly controversial and there is no consensus among researchers about the significance of findings, their meaning, or what conclusions may be drawn. It has been suggested that consciousness mostly serves to cancel certain actions initiated by the unconscious, so its role in decision making is experimentally investigated. Some thinkers, like Daniel Dennett or Alfred Mele, say it is important to explain that "free will" means many different things; these thinkers state that certain versions of free will (e.g. dualistic) appear exceedingly unlikely, but other conceptions of "free will" that matter to people are compatible with the evidence from neuroscience.

“Unconsciously” above, as an observation, comes from the fact that scans detect neural activity before the individual in the experiment reports to the research scientist that they have made a choice of some kind of which they were “self-aware”. The reasoning seems to be, that if the brain is active first in time, before the “individual”(?) reports a choice (second in time), that the brain (the matter by “itself”) is the initiating cause and the seemingly self-aware individual is in one way or another out of the causal loop. On occasion, in some experiments, the measure of the “gap” in time is only milliseconds.

Keep in mind that we are not actually observing the highly complex mental (brain?) activity of someone driving a car in traffic, or making love. The brain-scientist has to make his experiments very simple, such as asking someone to move a finger. Hidden in plain sight in the experiment is the assumption that the only causal element is the brain in some way or another. Consciousness is assumed to be rooted in the material brain, and that view is hardly questioned any more at all, except in some odd corners of philosophical inquiry. Not only that, but whatever “individuality” turns out to be, that too is rooted in the brain.

Part of the offered explanation is that because there are billions and billions of neurons and pathways, this complexity itself somehow has to make for how consciousness works. Keep in “mind” that there is no evidence for this, it is just assumed to be true, and has resulted in borrowing all kinds of computer terminology to use as metaphors for “brain” activity. The brain stores memories like a hard drive. The mind runs sub-routines to carry out bodily activity. The wet-ware - the brain - has hard wired software provided by millions of years of evolution. In the near future, we will be able to upload our individual memories and consciousness to computer storage, and live in essentially immortal robotic bodies (the singularity).

When I first encountered this philosophical/religious/scientific question in the late 1980s and early 1990s, I read a lot of neuroscience and cognitive science. It was very clear in these readings that the mind/body dualism problem had been basically set aside, although at that time, the '80s and '90s, scientists seemed to still notice that this conclusion that consciousness was rooted in the material brain was a working assumption. Since those decades, I no longer encounter any recognition of the assumption character of this conception. For details, see my essay The Idea of Mind: a Christian meditator considers the problem of consciousness. That consciousness is rooted in the material brain has, as with Darwinian evolution and speciation, become an unchallenged dogma in these fields of inquiry.

The basic working assumption in modern experiments then is that if the scans of the brain showed activity before the individual reports it, the brain - the matter - is somehow itself “acting”. Few other causal views are argued or considered. Forgotten, almost always, is the fact that the brain scan does not actually see either thought or choices or consciousness itself. That the brain is assumed to be the seat of these common human experiences is apparently no longer questioned.

To repeat, for this is important. Brain activity in certain already “mapped” regions is observed with a device. Then the individual reports that they made a choice. The actual choice, or what really inwardly happened within consciousness, when the brain activity was observed, is not seen. Not seen, but inferred; and, inferred in accord with the unproven, now dogmatic, assumption that consciousness is rooted in a physical material organ.

As this will naturally be asked, let me offer a very brief and different "explanation" for what the brain scientist sees:

First recall that if we move our mind's attention toward a hand, this additional attention will cause warmth to arise in that hand. Blood flow also can be increased by moving our attention's focus to a single part of the body. Now picture the possibility that "spirit", a transcendent feature of consciousness, moves free of the body ... hovers around it in a kind of way, but not in physical space. So when tasked (or stimulated) this "spirit/attention" uses the brain as an interface between its normal non-spatial realm and the physical realm, much like imagined in the film Avatar, when the consciousness of an individual is moved from their body to the manufactured body. As this "spirit/attention" is drawn toward the physical brain on the way to achieving the task which the research scientist asked the "I" (or spirit) of the experimental subject to do, the spirit/attention dips into the matter in the region of the brain previously mapped as habitually used for certain tasks. Also keep in "mind" the speeds at which this all happens. There is an important riddle hidden in that fact.

This "dipping in" is what the brain-scientist's instrument sees - the natural warming due to the movement of the attention, drawing the blood into that region. The subject was also asked to report to the research scientist when they accomplished the asked for task. This is actually a second task, and requires of the subject that they become active in the region of the brain associated with speech. There are then two tasks, one the action and the second the report.

Now in order to accomplish the first task, the inward (in the territory of consciousness) activity of the subject is an inner action of which they are not likely to be consciously aware. Why? Because the attention was not tasked with watching itself act out the first task. Only to do the first task - not to watch itself do the first task. In a few paragraphs we will take a brief look at those disciplines that deal with the task of self-watching, a rather peculiar inner activity, which is not normally done. Doing and watching-doing are two different acts. Anyway, it is the time gap between the doing and the reporting that is observed by the research scientist. The brain itself never "acted". Only the "I" or spirit acted, using the brain as an interface between its non-physical realm and the physical realm.

What is then not "seen", or understood, is that the unconscious aspects of this are just that: unconscious. That term only means that the cognitive-self does not notice what it does instinctively, because it is more focused on the doing, than on the watching-itself doing. This pretty well describes a great deal of the activities in which we engage, which Searle's lecture seeks to portray in this question: How does this consciousness act in the physical world? But the sea of ideas in which nearly everyone swims today, only conceives of the "animation" of the "alert" matter of the body as accomplished by the brain-organ of the physical body itself. There is no other "actor", as when Freud used the older terms for mind: soul and spirit.

Arguments at this level could go on forever, and most philosophers don't even know the relevant ideas described just above, which can be used as an alternative explanation. Thus, even many philosophers are trapped in the endlessly repeating argumentative nature of the discussions, as evidenced in the previous Wikipedia article, with its last comment repeated, here:

In many senses the field remains highly controversial and there is no consensus among researchers about the significance of findings, their meaning, or what conclusions may be drawn. It has been suggested that consciousness mostly serves to cancel certain actions initiated by the unconscious, so its role in decision making is experimentally investigated. Some thinkers, like Daniel Dennett or Alfred Mele, say it is important to explain that "free will" means many different things; these thinkers state that certain versions of free will (e.g. dualistic) appear exceedingly unlikely, but other conceptions of "free will" that matter to people are compatible with the evidence from neuroscience.

Perhaps something is missing. Perhaps we are confused precisely because we can't be other than confused (the snake of the raw intellect eats its own tail). Perhaps, in throwing out dualism (the mind/body distinction), we threw out the baby with the bathwater.

~~~~~~~~~

What is missing is a recognition of the real implications of the fact that each actor in this play - whether scientist or philosopher or experimental subject - has a direct experience of consciousness itself. The very complexity of the “object” that the brain-scientist is unable to measure with his scans and instruments is visible to every human being. What does not happen is an investigation of this territory, which is not an aspect of the very limited brain-map - actual consciousness is far more complicated than the structures of the brain. So, an old question resurfaces ... dualism is back, but perhaps a Way past its prior difficulties has been found.

Here we get to something rather remarkable, because the fact is that human beings have been studying their own consciousness for millennia. This embarrassment of riches, however, is a problem for the brain-scientist, because it defies the underlying assumption of mainstream science, in that these riches perceive not just matter, but what has to be called spirit as well.

Here are some names: Yoga, the Tao - or Way of Lao Tzu, Tibetan and Zen Buddhism, Kabbalah, Sufism, Gnosticism, Tarot, Alchemy, Rosicrucianism, Hermeticism, Hermetic Christianity, Romanticism, Transcendentalism, and Anthroposophy. Go to any serious New Age store, or any store or library with a wide selection of “spiritual” books, and it's all there. Studies of human inner life - or “consciousness”, with all manner of ways of understanding and practices in which the individual student can engage.

These do not all agree on the details. Some even claim to be non-theistic (i.e. no need for the idea of God). Yet, all posit an aspect of consciousness that is transcendent of matter. Why are these “sciences” of consciousness, that work with the actual territory and not the maps, ignored? This is a really good question.

Owen Barfield, in his book Speaker’s Meaning suggests that the minds of modern Western educated scientists encounter, in their fields of interest, contemporary kinds of taboos. If scientists, working in evolutionary biology or big bang cosmology, are forced somehow to admit that there might be matter and spirit, then all those theories have to be rethought. The huge theoretical edifice of modern materialistic natural science gets a very big flat tire - several in fact. A scientist who offers a spiritual explanation will risk being shunned within his own community.

What is at stake here?

Basically, the truth. If science is no longer interested in the truth, then it has just become another religion competing with other religions. If it is the truth that scientists desire, then any competing theory, even one which posits spirit as real, has to be on the table. The discussion has to shift, and the cutting edge of that need to shift is in the brain-sciences, for here a kind of natural limit in the search for knowledge has been reached.

You cannot, without being a complete hypocrite, ignore the fact that consciousness is only visible to the individual (the map is not the territory), and that this territory has already been studied by individuals, successfully, for thousands upon thousands of years.

What is also funny/strange/odd is that the same limit was encountered by physics during the 20th Century, when it had to start to consider the possibility that “consciousness” participated in the shaping of quantum events. Scientific materialism has run into the limits of the possibilities it has created for the “explaining” of the world - and that limit is this still unanswered question: What actually is consciousness and how do we come to scientific knowledge of its real nature and actual influence?

Outer space is very far away. But inner space is not. Dare the brain-scientists go where none of them seem to have gone before? A territory well explored for hundreds of centuries? Aspects of the whole future of human existence may well depend on getting this one right.

As someone who has empirically studied their own mind for four decades, I can assure you it is an adventure well worth taking. There are in fact, real maps to the territory, that can help the introspective investigator begin his journey. Here is just one example, Rudolf Steiner's book: The Philosophy of Spiritual Activity, which has the sub-title: "some results of introspection following the methods of natural science." This is a much better map to the territory, than that produced by brain-scientists, because it actually accepts the basic existential fact that consciousness is an open book to each individual that has the courage to take up the task, and observe empirically what stands within himself (again, the ancients: know thyself).

A few brief observations/conclusions, from my own investigations of the territory of the mind as inspired by Steiner's various "maps":

Thinking (spiritual activity) can observe its after effects in the consciousness (the soul), and thereby come to self-knowledge through empirical self-observation. Consciousness is a kind of mirror of thinking’s spiritual nature. Not all thoughts arise by our own efforts of will, but this un-willed territory too can be observed by its effects, and was previously named the unconscious.

The self-conscious thinking (concept creation) can be studied and developed like any other willed activity. Skill can become Craft can become Art. Its main will-components are the act of attention and the act of intention. That is, toward what object of thought do I direct my thinking activity (the attention), and for what reason (moral or otherwise) do I engage in that act of thinking (the purpose or the intention). The content which the method of conscious intentional thinking produces will be observed to vary according to how and in what way I vary the attention and the intention.

Awake thinking has a variety of modes, or ways of operation. These include, but are not limited to: organic thinking, pure thinking, reflection, theorizing, figuration, comparative thinking, associative thinking, picture thinking, imaginative thinking, concrete thinking, abstract thinking, warm thinking, cold thinking, thinking-about, thinking-with, thinking-within and thinking-as. The mind is an instrument which we can learn to play, and many acts of thinking can be accomplished as a kind of harmonic chord of more than one mode simultaneously.

Aspects of this play of the instrument of the mind also have to take account of feelings, or moods, that can go with or otherwise drive the modes of thinking. These include: sympathy, antipathy, pain, pleasure, anger, fear, love, joy, sadness etc. Moods can also be cultivated, not just reactive (spontaneous and undisciplined). Cultivated moods include awe and reverence. Moods and modes can be observed working in concert, sometimes like the resonant harmonies that arise in other objects, when some particular instrument produces the primary tone.

All of this means that the qualia, which many philosophers accept, but can’t yet figure out how to approach scientifically, can be investigated empirically via the skills, crafts, and arts of self-aware thinking, which I call elsewhere: The Rising of the Sun in the Mind***, or Sacramental Thinking. "Sun" in this instance needs to be seen in the way Rudolf Steiner tried to describe our Sun. Our Sun is a kind of emptiness in physical space, a kind of hole as it were. It is a place that is not there in physical space at all. What is there is concentrated ethereal space - the kind of space at the cosmic periphery where light and life are created. What we see as the physical "Sun" is the boundary conditions where at the "edges" of the spherical hole in physical space, light and life creation forces abound, arising from concentrated ethereal space, which is of such a qualitative nature that cosmic spiritual Beings can find a home there.

[***For anyone wanting a more personal and self-directed approach to these questions, I have created an app-like web page: "The Rising of the Sun in the Mind".]

A curious fact to keep in mind about the Sun, well known to physics, is that the Sun-body itself is not as hot as the "space" surrounding the Sun. The Sun surface (described above as the boundary condition of the transition from the physical to the ethereal) is about 10,000 degrees F. Meanwhile the "space" surrounding the Sun is one to two million degrees F or more. The frying pan is considerably hotter than the fire. This is one of those huge anomalies physics ignores, because it is so inconvenient to its current theories about the physical Sun.

The Rising of the Sun in the Mind then is the creation/opening of a hole in the mind-space, where the peripheral or "edge-like" conditions allow light and life forces to arise before the inner eye of the self-consciousness. Tradition calls this inner eye the third-eye, but it is not a physical eye - it is a spiritual eye. At the same time, this inner eye is creative - it makes thought. It draws/invents thought out of the realm of timeless and spaceless conditions, through the state of pure ethereal space, into the "place" of physical space, ultimately descending into the words in discursive thinking, and then into speech. It is also not "dramatic", but you might say: just the opposite. It is subtle and delicate. It is where the still small voice can speak. Where angels offer their insights, in tune with the quiet slow-motion and soundless beating of butterfly wings.

It is: Living Thinking. It uses the brain stuff in order to interact with the physical world. But it is not itself physical. The true I is not the brain. It is pure spirit, like the wind as described by John in his Gospel: "What’s born of the flesh is flesh, and what’s born of the breath is breath. Don’t be amazed because I told you you have to be born again. The wind blows where it will and you hear the sound of it, but you don’t know where it comes from or where it goes; it’s the same with everyone born of the breath." John 3:6-8. The soundless sound. The lightless light. The spaceless space. The lifeless life, the deathless death, and the timeless time, or ... the Eternal Now, where it is always: In the Beginning ~~~

There is a great deal more that is reported in the little-known modern literature on the territory of consciousness, and its major components: thinking, feeling and willing. See my website Shapes in the Fire for details, as well as references to the works of others.

An article on why the math shows that thought cannot be encoded in a computer